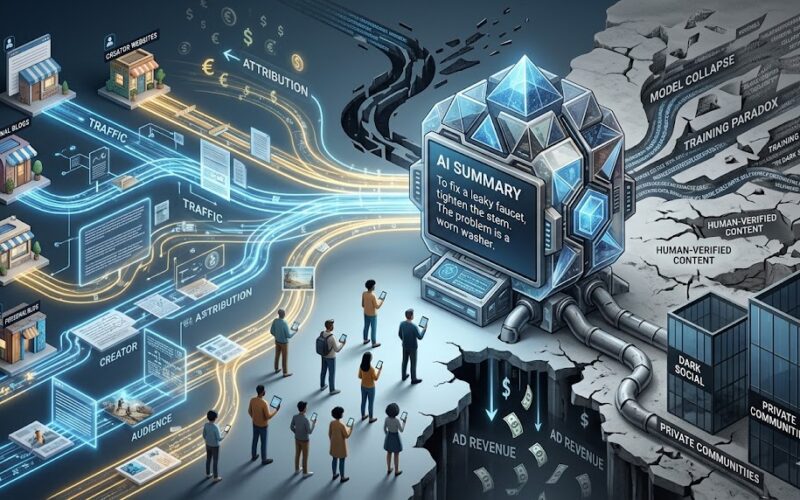

The open web has long operated on an unspoken contract: you publish high-quality content, and in exchange, search engines send you an audience. Whether it pays off through ad revenue, affiliate links, or brand recognition, that traffic is the lifeblood of creators, developers, and writers.

But as AI summaries become the default interface for search, that contract is being rewritten—without the creators’ consent.

The “Zero-Click” Dead End

The convenience of an AI summary is undeniable. If you need to know how to fix a leaky faucet or the best way to manage a complex backend database, a concise paragraph is faster than scrolling through a 2,000-word article.

However, this creates a “Zero-Click” reality. When the AI delivers the “answer” directly:

- Ad revenue vanishes: No page visit means no impression.

- Attribution fades: Users rarely check the tiny citations at the bottom.

- The “Human” is lost: The personal connection between a reader and a blogger—the community aspect—evaporates.

The Training Paradox

The zero-click problem feeds a systemic risk often called model collapse. It starts with supply: if independent creators stop posting technical guides or personal insights because they can’t pay their bills, the “data well” runs dry.

AI models do not “know” things; they predict patterns based on what humans have already written. If we stop feeding the web with fresh, human-verified content, future AI models will increasingly train on the output of previous AIs. This creates a feedback loop of degradation in which information becomes more generic, less accurate, and increasingly detached from real-world updates.
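To make that feedback loop concrete, here is a toy sketch in Python. It is not how any real model is trained. It assumes a deliberately crude stand-in: each "generation" produces its corpus by sampling, with replacement, from the previous generation's corpus. The function name `simulate_generations` and all of its parameters are hypothetical, chosen only for illustration.

```python
import random


def simulate_generations(vocab_size=100, corpus_size=100, generations=10, seed=0):
    """Return the number of distinct 'ideas' left in the corpus after each generation.

    Toy assumption: a generation can only reproduce what the previous
    corpus already contains, so it samples from it with replacement.
    """
    rng = random.Random(seed)
    # Generation 0: a human-written corpus in which every idea appears once.
    corpus = list(range(vocab_size))
    distinct_counts = [len(set(corpus))]
    for _ in range(generations):
        # The next corpus is drawn only from the previous one; nothing new enters.
        corpus = rng.choices(corpus, k=corpus_size)
        distinct_counts.append(len(set(corpus)))
    return distinct_counts


if __name__ == "__main__":
    # Prints one count per generation, starting with the original corpus.
    print(simulate_generations())
```

Because no new material ever enters the loop, the count of distinct ideas can only stay flat or shrink from one generation to the next. Rare items are the first to drop out, and once they are gone they never come back, which is the "more generic" outcome described above in miniature.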

A Shift in Value

We are reaching a crossroads. If creators aren’t rewarded with income or credit, the incentive to share knowledge publicly disappears. We might see a retreat into “Dark Social”—private communities, paywalled newsletters, and gated forums—where AI scrapers can’t reach.

The very tool designed to make information more accessible might, in the long run, make high-quality, original information much harder to find.